System Architecture

The agent follows a research → write → validate → review → learn cycle. Admin edits become distilled guidelines that shape future generations — a continuous learning loop.

Data Sources

Every report combines four independent data streams. The AI never invents numbers — prices, routes, and deadlines come from verified sources.

ERP (PostgreSQL)

Database efi_erp_2025_14 on DigitalOcean. Supplies:

- FOB prices from supplier_purchase_orders (781 POs)

- Transit times from ref_otw_transit_times (84 port pairs)

- Ocean freight rates from ref_landed_cost_estimates

- Sourcing deadlines from supplier_capacities

CRM (Airtable)

12 fetchers pull customer-facing data:

- Customer profile from Customers table

- Monthly snapshot, YTD totals from Sales Orders

- Sourcing analysis, pricing recap from Contract Line Items

- Product logos from Products table

Web Research (Claude)

Pass 1 uses Claude Sonnet 4.6 with the web_search tool to perform up to 20 live searches across 9 research categories:

- US tariffs & trade policy (HTS 3823, 1511, 1516)

- Palm oil pricing (CPO, stearin, PFAD, palmitic acid)

- Ocean freight indices (FBX01, FBX03)

- Currency, supply/demand, weather, geopolitical

- US dairy market (CME butter, Class III/IV, butterfat)

Price History (Accumulated)

Rolling 12-month commodity price archive, updated each generation run:

- CPO (Bursa Malaysia, MYR/MT)

- Palm Stearin (USD/MT)

- PFAD (USD/MT)

- Palmitic Acid (USD/MT)

Stored in knowledge/price-history.json — feeds the commodity pricing chart on the report.
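As a rough illustration of how a rolling 12-month archive can be maintained, the sketch below inserts or overwrites one month and trims the list to the newest 12 entries. The `PricePoint` shape, its field names, and `upsertPricePoint` are assumptions made for this example; the actual layout of knowledge/price-history.json is not documented here.

```typescript
// Hypothetical shape of one archive entry; field names are illustrative only.
interface PricePoint {
  month: string; // "YYYY-MM", so string order equals chronological order
  cpoMyrPerMt: number; // CPO, Bursa Malaysia, MYR/MT
  stearinUsdPerMt: number; // Palm Stearin, USD/MT
  pfadUsdPerMt: number; // PFAD, USD/MT
  palmiticUsdPerMt: number; // Palmitic Acid, USD/MT
}

const MAX_MONTHS = 12;

// Insert or overwrite the entry for a month, then keep only the newest 12.
function upsertPricePoint(archive: PricePoint[], point: PricePoint): PricePoint[] {
  const others = archive.filter((p) => p.month !== point.month);
  return [...others, point]
    .sort((a, b) => a.month.localeCompare(b.month))
    .slice(-MAX_MONTHS);
}
```

Overwriting by month keeps repeated generation runs within the same month from producing duplicate chart points.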

Sample reports as training data.

Historical PDFs in data/samples/ (Oct 2024, Nov 2024, Aug 2021, Dec 2018) defined the report structure, section categories, bullet format, and narrative voice. These informed the prompt engineering: the AI is instructed to match this specific style.

AI Pipeline — Three Passes

Each monthly report requires 9 API calls across two AI providers: 1 research call, 7 narrative calls, and 1 validation call. Using a separate model for validation reduces the risk of self-confirmation bias.

Pass 1 — Research Agent

Model: Claude Sonnet 4.6 with web_search tool

Calls: 1 (multi-turn with up to 20 searches)

Tokens: ~8K input / ~4K output

The research agent executes a structured search plan across 9 priority-ordered categories. It returns a typed MarketResearch JSON in which every data point is sourced, including the URL and retrieval date.
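To make the "every data point sourced" requirement concrete, here is a minimal sketch of what a sourced data point and a completeness check could look like. The field names and the `findUnsourced` helper are hypothetical; the real MarketResearch type is defined in the project and may differ.

```typescript
// Hypothetical shape; not the project's actual MarketResearch definition.
interface SourcedDataPoint {
  category: string; // one of the research categories
  label: string; // e.g. "FBX01"
  value: string;
  sourceUrl: string;
  retrievedAt: string; // ISO date string
}

interface MarketResearch {
  month: string;
  dataPoints: SourcedDataPoint[];
}

// Return the data points that lack a usable source URL or retrieval date.
function findUnsourced(research: MarketResearch): SourcedDataPoint[] {
  return research.dataPoints.filter(
    (p) => !/^https?:\/\//.test(p.sourceUrl) || Number.isNaN(Date.parse(p.retrievedAt)),
  );
}
```

A check like this could run before Pass 2 so that unsourced figures never reach the writer.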

Pass 2 — Narrative Writer

Model: Claude Sonnet 4.6 (no web search)

Calls: 7 sequential (one per section)

Tokens: ~18K input / ~1.8K output

Temperature: 0.3 (low, for consistent output)

Each section call receives the same base context (research JSON + ERP data + memory + guidelines) plus section-specific instructions. The writer never searches — it only shapes existing data into narrative.
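The shared-context pattern described above can be sketched as follows. All type and function names here are illustrative assumptions rather than code from narrative-writer.ts, and the model call is injected as a parameter so the sketch does not depend on any provider SDK.

```typescript
// Hypothetical types; names are illustrative.
interface BaseContext {
  researchJson: string;
  erpData: string;
  memory: string;
  guidelines: string;
}

interface SectionSpec {
  id: string; // e.g. "oceanFreight"
  instructions: string; // section-specific instructions
}

// Injected model call: takes a prompt and a temperature, returns the text.
type CallModel = (prompt: string, temperature: number) => Promise<string>;

// Same base context for every section, followed by that section's instructions.
function buildSectionPrompt(base: BaseContext, section: SectionSpec): string {
  return [
    `RESEARCH:\n${base.researchJson}`,
    `ERP DATA:\n${base.erpData}`,
    `MEMORY:\n${base.memory}`,
    `EDITORIAL GUIDELINES:\n${base.guidelines}`,
    `SECTION (${section.id}):\n${section.instructions}`,
  ].join("\n\n");
}

// One call per section, awaited in order so the calls never overlap.
async function writeSections(
  base: BaseContext,
  sections: SectionSpec[],
  callModel: CallModel,
): Promise<Record<string, string>> {
  const out: Record<string, string> = {};
  for (const section of sections) {
    out[section.id] = await callModel(buildSectionPrompt(base, section), 0.3);
  }
  return out;
}
```

Running the calls sequentially trades speed for predictable rate-limit behavior; the prompt layout shown is only one plausible arrangement.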

Pass 3 — Validation Agent

Model: Google Gemini 2.5 Flash (independent provider)

Calls: 1

Tokens: ~8K input / ~600 output

A separate AI provider cross-checks every narrative claim against the research JSON. Using Gemini (not Claude) reduces the risk of self-confirmation bias, because the validator has no knowledge of how the narrative was generated.

Overall confidence = 40% rule-based data quality + 60% Gemini AI validation (0–100)
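The weighting above can be written out as a small function. This is a sketch under two assumptions not stated in the source: both inputs are already on a 0 to 100 scale, and the blended result is rounded to a whole number. The function names are hypothetical.

```typescript
// Weights from the formula above.
const RULE_WEIGHT = 0.4;
const VALIDATION_WEIGHT = 0.6;

// Keep a score inside the 0 to 100 range.
function clamp(score: number): number {
  return Math.min(100, Math.max(0, score));
}

// Blend the rule-based data quality score with the Gemini validation score.
// Rounding to an integer is an assumption made for this sketch.
function overallConfidence(ruleBasedScore: number, validationScore: number): number {
  const blended =
    RULE_WEIGHT * clamp(ruleBasedScore) + VALIDATION_WEIGHT * clamp(validationScore);
  return Math.round(blended);
}
```

For example, a rule-based score of 80 and a validation score of 90 give 0.4 × 80 + 0.6 × 90 = 86.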

Prompt Engineering

Each narrative call injects four layers of context into the prompt. This is how the system learns — prior corrections shape future output without retraining the model.

The system stores a summary for each prior month: market direction (bearish/bullish/neutral), key prices, top themes, and admin feedback scores. Injected as:

Direction: bullish

CPO: 4,186 MYR/MT (up)

Themes: tariff uncertainty, freight tightening

Stored in .narrative-cache/agent-memory.json — max 12 months
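A minimal sketch of how a stored month summary might be rendered into the injected lines shown above, and how the store could be held to 12 months. The `MonthMemory` shape, the `cpoTrend` values, and both helper names are assumptions for illustration; admin feedback scores are left out for brevity.

```typescript
type Direction = "bearish" | "bullish" | "neutral";

// Hypothetical summary shape; the real agent-memory.json layout may differ.
interface MonthMemory {
  direction: Direction;
  cpoMyrPerMt: number;
  cpoTrend: "up" | "down" | "flat";
  themes: string[];
}

// Render one month's summary as prompt lines.
function formatMemory(m: MonthMemory): string {
  return [
    `Direction: ${m.direction}`,
    `CPO: ${m.cpoMyrPerMt.toLocaleString("en-US")} MYR/MT (${m.cpoTrend})`,
    `Themes: ${m.themes.join(", ")}`,
  ].join("\n");
}

const MAX_MEMORY_MONTHS = 12;

// Keep only the newest 12 entries (assumes entries are stored oldest first).
function trimMemory<T>(entries: T[]): T[] {
  return entries.slice(-MAX_MEMORY_MONTHS);
}
```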

Every admin edit generates a correction entry. Claude Haiku periodically distills the full correction log into max 25 actionable rules, grouped by section.

• Always include specific dates

• Never hedge with 'seems' or 'appears'

• Max 200 chars per bullet point

Stored in knowledge/corrections-guidelines.json — version-controlled

Real business data from EFI's systems. The AI uses these as ground truth for prices, routes, and deadlines — never inventing numbers.

FOB: MagnaPalm $845/MT (-2.1%)

Route: Belawan→Houston 32 days

Deadline: April orders by Mar 15

Extracted live from PostgreSQL + Airtable

Voice guidelines are hard-coded in the system prompt and derived from the sample PDFs in data/samples/. They define how the AI writes: tone, length, formatting, and audience.

• Max 200 chars per bullet

• Use 'EFI' not 'we'

• Confident: 'we expect' not 'seems'

Hard-coded in narrative-writer.ts system prompt

Voice rules are embedded in the system prompt. The writer is instructed to be "professional but accessible, written for dairy farmers": confident ("we expect" not "it seems"), specific with numbers, action-oriented, and reassuring even with bad news. Max 200 chars per bullet. Use "EFI" not "we". These rules were derived from the sample PDFs uploaded to data/samples/.

The Learning Loop

Every admin interaction with the report creates a feedback signal that shapes future reports. This is the core of the agentic pattern — the system gets better with each cycle.

- An admin rewrites a paragraph. The original and edited text become a correction entry.

- An admin re-prompts a section, for example "Make this more specific about tariffs." The instruction and its result teach the system what level of detail is expected.

- An admin hides a section. Hidden sections signal content the AI should de-prioritize, and feedback scores track approval.

- An admin shrinks or expands a section. The AI condenses to ~50% or expands to ~150%, which teaches the preferred section length.

- An admin creates a custom section via AI prompt or manual text. Added sections carry an ADDED badge and support reordering, PDF output, and delete with confirm.

Corrections Distillation Process

The original AI text and the admin's corrected version are stored in corrections-log.json with a category (factual, tone, structure, omission, emphasis) and explanation.

Claude Haiku reads the full correction log and distills it into max 25 actionable rules, grouped by section (general, assessment, topNews, priceFactors, oceanFreight, actionItems, asiaInsight). Max 5 rules per section to keep prompts focused.

Distilled rules are saved as a versioned JSON file. This means the learning is transparent, auditable, and survives code deploys.

The next time a report is generated, all distilled rules (up to 25) are injected as EDITORIAL GUIDELINES in the system prompt. The AI follows these rules alongside the voice guidelines from the sample reports.
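The two caps in the distillation step (at most 5 rules per section, at most 25 overall) interact, since 7 sections at 5 rules each would exceed 25. The sketch below shows one way to enforce both limits on a list of distilled rules and render them for the prompt. Whether the project enforces the caps in code or only through the Haiku prompt is not stated, so `capRules` and `renderGuidelines` are hypothetical helpers.

```typescript
const SECTIONS = [
  "general", "assessment", "topNews", "priceFactors",
  "oceanFreight", "actionItems", "asiaInsight",
] as const;
type Section = (typeof SECTIONS)[number];

interface Rule {
  section: Section;
  text: string;
}

const MAX_PER_SECTION = 5;
const MAX_TOTAL = 25;

// Keep at most 5 rules per section and 25 overall, preserving input order.
function capRules(rules: Rule[]): Rule[] {
  const perSection = new Map<Section, number>();
  const kept: Rule[] = [];
  for (const rule of rules) {
    if (kept.length >= MAX_TOTAL) break;
    const count = perSection.get(rule.section) ?? 0;
    if (count >= MAX_PER_SECTION) continue;
    perSection.set(rule.section, count + 1);
    kept.push(rule);
  }
  return kept;
}

// Render the capped rules as a block for the system prompt, grouped by section.
function renderGuidelines(rules: Rule[]): string {
  const lines: string[] = ["EDITORIAL GUIDELINES"];
  for (const section of SECTIONS) {
    const texts = rules.filter((r) => r.section === section).map((r) => `- ${r.text}`);
    if (texts.length > 0) lines.push(`[${section}]`, ...texts);
  }
  return lines.join("\n");
}
```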

Cross-month memory is separate from corrections. Memory tracks what happened (prices, direction, themes) for continuity. Guidelines track how to write (rules, style, emphasis) for quality. Both are injected but serve different purposes.

LLM Stack & Cost

| Component | Model | Calls | Input Tokens | Output Tokens | Cost |
|---|---|---|---|---|---|
| Research Agent | Claude Sonnet 4.6 | 1 (+ web search) | ~8,000 | ~4,000 | $0.07 |
| Narrative Writer | Claude Sonnet 4.6 | 7 sequential | ~18,000 | ~1,800 | $0.07 |
| Validation | Gemini 2.5 Flash | 1 | ~8,000 | ~600 | <$0.01 |
| Corrections Distillation | Claude Haiku 4.5 | 1 (on-demand) | varies | ~1,500 | <$0.01 |
| Total per run | | 9 | ~34,000 | ~6,400 | ~$0.14 |

Caching & Orchestration

Reports are generated once per month and cached on disk. The orchestration layer handles deduplication, rate limiting, and failure recovery.

Request Deduplication

If multiple users request the same month's report simultaneously, a single AI generation runs and all callers share the same Promise. Prevents redundant API calls and rate limit issues.
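The shared-Promise idea can be sketched with a map of in-flight generations keyed by month. The names `inFlight` and `generateOnce` are illustrative, and the generation function is passed in as a parameter; this is not the code from fetchers/market-narrative.ts.

```typescript
// In-flight generations, keyed by report month (e.g. "2026-03").
const inFlight = new Map<string, Promise<string>>();

// Return the existing promise for a month if one is running; otherwise start one.
function generateOnce(
  month: string,
  generate: (month: string) => Promise<string>,
): Promise<string> {
  const existing = inFlight.get(month);
  if (existing) return existing;

  const promise = generate(month);
  // Remove the entry once the run settles, whether it succeeded or failed.
  const cleanup = (): void => {
    inFlight.delete(month);
  };
  promise.then(cleanup, cleanup);
  inFlight.set(month, promise);
  return promise;
}
```

Every caller that arrives while a run is pending receives the same promise, so a failure is also shared by all of them.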

Failure Cooldown

After a generation failure (API error, rate limit), a 5-minute cooldown prevents repeated hammering. Exponential backoff on 429 errors (30s, 60s, 120s).
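The cooldown and backoff rules above might look like the following. The delay schedule and the 5-minute window come from the text; the class, the function names, the injected `sleep` and `isRateLimit` parameters, and the choice to give up after the third retry are assumptions made for this sketch.

```typescript
const BACKOFF_MS = [30_000, 60_000, 120_000]; // 30s, 60s, 120s
const COOLDOWN_MS = 5 * 60_000; // 5 minutes

// Delay before retry number `attempt` (0-based); undefined means give up.
function backoffDelay(attempt: number): number | undefined {
  return BACKOFF_MS[attempt];
}

// Tracks the last failure per key so callers can skip work during the cooldown.
class FailureCooldown {
  private lastFailure = new Map<string, number>();

  recordFailure(key: string, now: number = Date.now()): void {
    this.lastFailure.set(key, now);
  }

  isCoolingDown(key: string, now: number = Date.now()): boolean {
    const failedAt = this.lastFailure.get(key);
    return failedAt !== undefined && now - failedAt < COOLDOWN_MS;
  }
}

// Retry `fn` on rate-limit errors using the schedule above; rethrow anything else.
async function withBackoff<T>(
  fn: () => Promise<T>,
  isRateLimit: (err: unknown) => boolean,
  sleep: (ms: number) => Promise<void>,
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await fn();
    } catch (err) {
      const delay = backoffDelay(attempt);
      if (!isRateLimit(err) || delay === undefined) throw err;
      await sleep(delay);
    }
  }
}
```

Passing `sleep` in as a parameter keeps the retry logic testable without real waiting.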

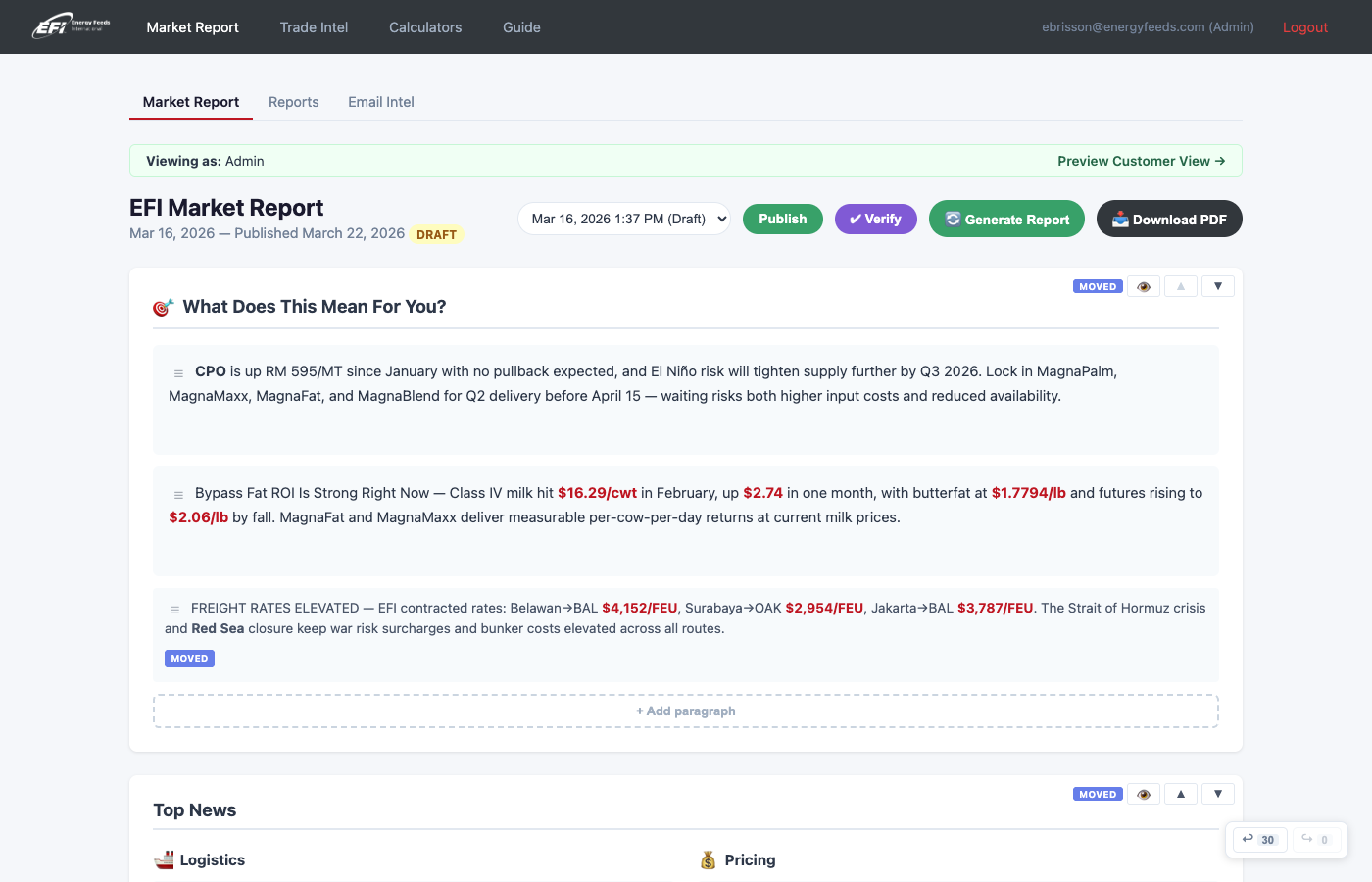

Admin Editing & Workflow

Rich editorial controls let admins shape AI-generated reports before publishing to customers. Every admin action participates in the learning loop.

Rich Text Editor

Contenteditable + toolbar with bold, italic, underline, and color formatting. Stored as HTML in editorialOverrides. Figures auto-highlighted in non-rich text.

Undo / Redo

Full undo/redo stack for editorial changes. POST /api/undo and POST /api/redo restore previous states. GET /api/undo-status returns stack counts.
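A two-stack structure is a common way to back endpoints like these. The sketch below is a generic in-memory version with hypothetical names; how the project persists editorial states is not described here.

```typescript
// Generic undo/redo over snapshots of some state type T.
class UndoRedoStack<T> {
  private undoStack: T[] = [];
  private redoStack: T[] = [];
  private current: T;

  constructor(initial: T) {
    this.current = initial;
  }

  // Apply a new state; any redo history is discarded.
  apply(next: T): void {
    this.undoStack.push(this.current);
    this.current = next;
    this.redoStack = [];
  }

  // Step back one state if possible; returns the state now in effect.
  undo(): T {
    if (this.undoStack.length > 0) {
      this.redoStack.push(this.current);
      this.current = this.undoStack.pop() as T;
    }
    return this.current;
  }

  // Step forward one state if possible; returns the state now in effect.
  redo(): T {
    if (this.redoStack.length > 0) {
      this.undoStack.push(this.current);
      this.current = this.redoStack.pop() as T;
    }
    return this.current;
  }

  // Stack counts, comparable to what an undo-status endpoint could return.
  status(): { undo: number; redo: number } {
    return { undo: this.undoStack.length, redo: this.redoStack.length };
  }
}
```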

Version History

Every editorial change is versioned. GET /api/version-history shows all versions for a section. POST /api/activate-version restores a specific version.

Content Consistency Checker

Gemini-powered verification of admin edits against the full report. Pre-publish gate: blocks on errors, allows warnings. Purple section highlighting.
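The "blocks on errors, allows warnings" rule reduces to a small decision function. The `Finding` shape and `prePublishGate` name below are assumptions for illustration; the checker's real output format is not documented here.

```typescript
type Severity = "error" | "warning";

// Hypothetical shape of one checker finding.
interface Finding {
  section: string;
  severity: Severity;
  message: string;
}

interface GateResult {
  canPublish: boolean;
  errors: Finding[];
  warnings: Finding[];
}

// Publishing is blocked by any error; warnings are reported but do not block.
function prePublishGate(findings: Finding[]): GateResult {
  const errors = findings.filter((f) => f.severity === "error");
  const warnings = findings.filter((f) => f.severity === "warning");
  return { canPublish: errors.length === 0, errors, warnings };
}
```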

Draft / Published Workflow

Reports start as Draft (admin-only). Admin publishes to make visible to customers. Unpublish reverts to draft. Status tracked in report-status.json.

Section & Item Controls

Hide/unhide sections and individual items. Reorder sections and items within sections. Move items between sections. Add custom AI-generated or manual sections with ADDED badge.

Customer Insights Server (Port 3001)

A separate Express server for data-only customer dashboards. It has no AI dependency: it starts instantly and runs without ANTHROPIC_API_KEY.

Customer Dashboard

Per-customer insights: monthly snapshot, YTD totals, pricing recap, sourcing analysis, purchase chart with trailing 12-month data, product logos, and loadout schedule. All 12 data fetchers run in parallel via Promise.all().
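Running fetchers in parallel with Promise.all() can be sketched as below. The `Fetcher` signature and `loadDashboard` are hypothetical; the real fetchers and their arguments are not shown in this document.

```typescript
// Hypothetical fetcher signature: each returns one slice of dashboard data.
type Fetcher = (customerId: string, month: string) => Promise<unknown>;

// Start every fetcher at once, wait for all of them, and key results by name.
async function loadDashboard(
  fetchers: Record<string, Fetcher>,
  customerId: string,
  month: string,
): Promise<Record<string, unknown>> {
  const names = Object.keys(fetchers);
  const results = await Promise.all(
    names.map((name) => fetchers[name](customerId, month)),
  );
  const dashboard: Record<string, unknown> = {};
  names.forEach((name, i) => {
    dashboard[name] = results[i];
  });
  return dashboard;
}
```

Note that Promise.all() rejects as soon as any fetcher fails; Promise.allSettled() is the usual alternative when a partial dashboard is acceptable.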

PDF Export

Full customer dashboard as PDF via Puppeteer. Admin picker lets you select any customer + month combination. Customer list based on trailing 12-month fulfilled tonnage.

Two independent servers, one package.

Market Report (port 3000) and Customer Insights (port 3001) share fetchers, views, and auth code but have different route sets. Start them with npm run market and npm run insights.

Hosted Modules (Port 3000)

The market report server hosts several additional modules alongside the core market report. Desktop top nav: Market Report | Trade Intel | Calculators | Guide.

Trade Intelligence

Competitive analysis from Datamyne BOL records: dashboard, importer/supplier rankings, classification watch, entity resolution, and brand discovery. Routes at /trade-intel/*.

Calculators

Milk Pricing & ROI (live USDA data, EFI sales overlay, AI narrative), Fat Blending (LP optimizer, Product Database targets), Product Database (60+ products, CRUD, 9 categories).

Mobile PWA

Progressive Web App at /m/* routes. Bottom tab bar: Reports | Intel | Calc. Service worker (network-first), Add to Home Screen from Safari. Intel and Calc sub-pages via pill bars.

Supplier Intel

IMAP email poller for supplier market intelligence (GNNH, RIM, etc.). AI extraction, formatted detail views, reprocess/delete controls. Routes at /supplier-intel/*.

Supplier Intel Email Poller

IMAP-based email ingestion for supplier market intelligence. Automatically polls for emails from key suppliers (GNNH, RIM, etc.), extracts structured data via AI, and presents formatted detail views.

Two-Phase Fetch

Envelopes downloaded first (fast), then full message source per message. 90s socket timeout, 30s greeting/connection. Scans last 50 messages by sequence number.

HTML Extraction

Handles base64 decoding, quoted-printable encoding, HTML tag stripping, and Outlook forwarded emails with nested MIME parts.
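The decoding steps named above can be illustrated with three small helpers. This is a simplified sketch, not the poller's implementation: it assumes UTF-8 content, handles only two HTML entities, and does not walk nested MIME parts. All function names are hypothetical.

```typescript
// Decode a quoted-printable body into text (assumes UTF-8 content).
function decodeQuotedPrintable(input: string): string {
  const joined = input.replace(/=\r?\n/g, ""); // drop soft line breaks
  const bytes: number[] = [];
  for (let i = 0; i < joined.length; i++) {
    const hex = joined.slice(i + 1, i + 3);
    if (joined[i] === "=" && /^[0-9A-Fa-f]{2}$/.test(hex)) {
      bytes.push(parseInt(hex, 16)); // "=C3" becomes byte 0xC3
      i += 2;
    } else {
      bytes.push(joined.charCodeAt(i) & 0xff); // literal 7-bit character
    }
  }
  return new TextDecoder("utf-8").decode(new Uint8Array(bytes));
}

// Decode a base64 body into text (assumes UTF-8 content).
function decodeBase64(input: string): string {
  const binary = atob(input.replace(/\s+/g, ""));
  const bytes = Uint8Array.from(binary, (ch) => ch.charCodeAt(0));
  return new TextDecoder("utf-8").decode(bytes);
}

// Strip script/style blocks and tags, then collapse whitespace.
function stripHtml(html: string): string {
  return html
    .replace(/<(script|style)[^>]*>[\s\S]*?<\/\1>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/&nbsp;/g, " ")
    .replace(/&amp;/g, "&")
    .replace(/\s+/g, " ")
    .trim();
}
```

Regex-based tag stripping is adequate for pulling plain text out of newsletters but is not a general HTML parser.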

Formatted Detail Views

Source-specific formatting: GNNH (prices table, FOB, production, outlook), RIM (tariffs, AD/CVD, impact), Other (summary, data points). Icon buttons (eye/refresh/trash) with custom confirm dialogs.

Reprocess & blocklist. The reprocess button re-runs AI extraction with improved text decoding on any previously ingested email. Deleted emails are added to a blocklist to prevent re-ingestion on the next poll cycle.

Key Source Files

| File | Purpose | Lines |
|---|---|---|
| fetchers/market-narrative.ts | Orchestration: caching, dedup, queue, pipeline execution | ~1,500 |
| narrative/research-agent.ts | Pass 1: Claude + web_search, 9 research categories | ~400 |
| narrative/narrative-writer.ts | Pass 2: 7 sequential section generators + shrink/expand/re-prompt | ~600 |
| narrative/validation-agent.ts | Pass 3: Gemini cross-check, confidence scoring | ~200 |
| narrative/memory.ts | Cross-month memory: prices, direction, themes, feedback | ~150 |
| narrative/corrections.ts | Correction log + Haiku distillation → up to 25 editorial rules | ~200 |
| narrative/types.ts | Section definitions, feedback types, editorial overrides | ~250 |
| narrative/confidence-score.ts | Rule-based data quality scoring (40% of overall confidence) | ~150 |
| narrative/consistency-checker.ts | Gemini pre-publish gate: flags contradictions in edited reports | ~150 |
| supplier-intel/email-poller.ts | IMAP email polling: two-phase fetch, HTML extraction, delete blocklist | ~400 |
| supplier-intel/extractor.ts | AI extraction: GNNH/RIM/Other format detection, structured data output | ~300 |
| knowledge/corrections-guidelines.json | Distilled editorial rules (max 25), version-controlled | n/a |
| data/samples/*.pdf | Historical reports that defined voice, structure, bullet format | n/a |